In today’s hyper-competitive mobile world, two watchwords should underpin all your online marketing initiatives: test and measure.

Yes, I am talking about the classic, powerful technique of split testing (commonly called A/B testing). When you launch a new website, landing page, banner ad, or e-mail campaign, you run competing variations of it, then capture and analyze the pertinent metrics for each one.

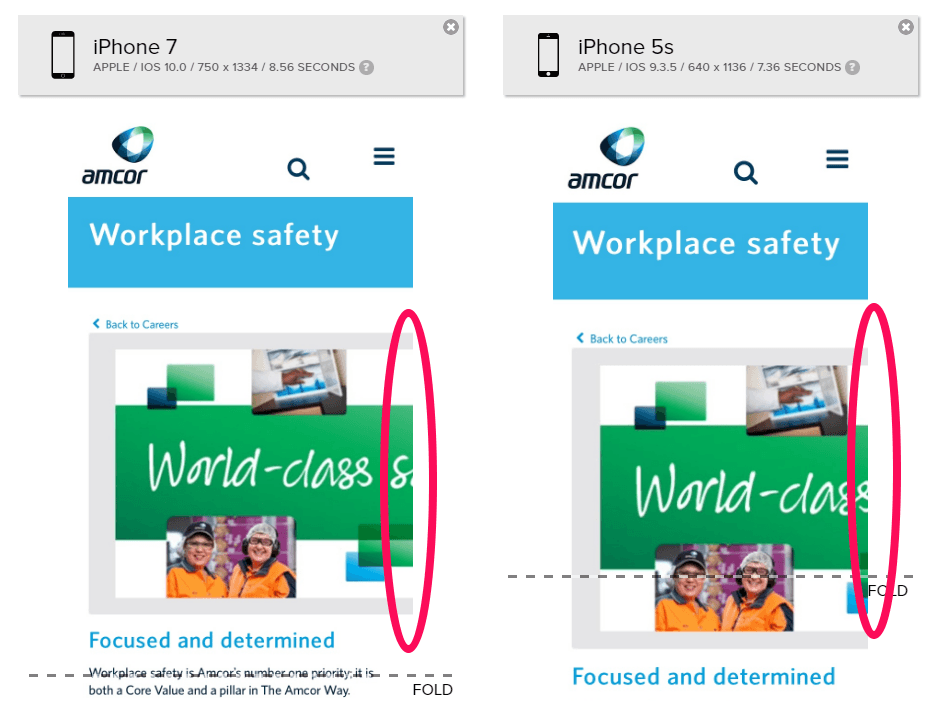

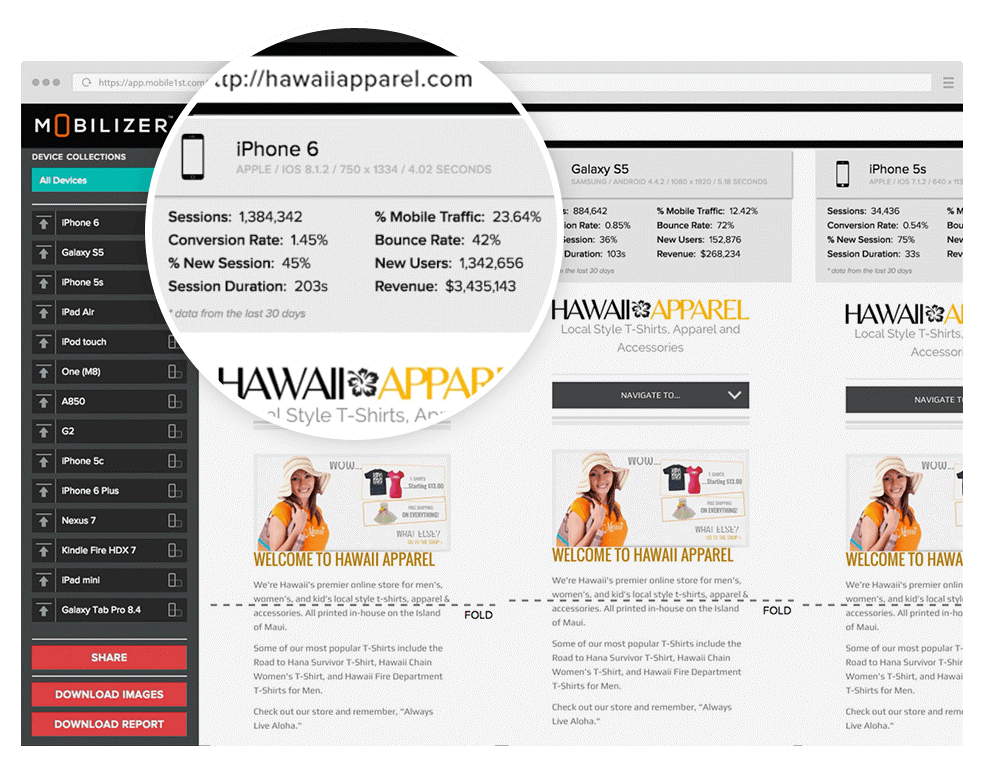

Naturally, as you modify and test design elements, images, or even the template or layout, it’s imperative to make sure each variation renders accurately across popular mobile devices. Your A/B test results must reflect the changes you deliberately made, not some glitch in your mobile display.

Why Split Test?

Without the data that established split-testing practices provide, it’s impossible to gain the insights you need to achieve the best possible results. Only by knowing what is working, and why, can you improve your messaging and designs and maximize click-throughs, time spent on page, conversion rates, revenue, and so on.

In a nutshell, A/B testing uses two or more variants to compare performance. Like any endeavor that seeks empirical evidence, it relies on the classical scientific method. You modify specific elements for the purpose of comparison (The Experiment) and combine that with precise observation and measurement (Assessing the Results). These initial experiments must then be followed by refining the variants, testing their efficacy again, and continuing to optimize the website, landing page, banner ad, e-mail, etc.
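
For the technically curious, here is one way the “split” itself can work. This is a minimal sketch, not how any particular testing tool does it. It assumes each visitor carries a stable ID, such as a first-party cookie value. The function name `assign_variant` and the experiment label are hypothetical. Hashing the ID keeps each returning visitor on the same variant while spreading traffic roughly evenly across variants.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, variants: list[str]) -> str:
    """Hypothetical helper: deterministically map a visitor to one variant.

    Hashing the experiment name together with the visitor ID gives each
    visitor a stable bucket per experiment, so a returning visitor keeps
    seeing the same version while traffic is spread roughly evenly.
    """
    if not variants:
        raise ValueError("need at least one variant")
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the hash as a large integer, then bucket it.
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


# The same visitor always lands in the same bucket for this experiment.
print(assign_variant("visitor-123", "headline-test", ["A", "B"]))
```

The hash is seeded with the experiment name. That way, a visitor's bucket in one test doesn't dictate their bucket in the next.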

To become familiar with the process quickly, it’s a good idea to define the parameters necessary to conduct effective split testing:

- Identify the crucial elements you can (and should) test

- Categorize the important metrics you’re measuring

- Stick to the generally accepted A/B testing process

The reasons most marketing pros are big believers in A/B testing are simple:

Efficiency: Spending resources, and especially money, foolishly (whether your own or your client’s) is careless and unforgivable. A famous business adage, often attributed to department-store pioneer John Wanamaker, goes: “I know I’m wasting half of the money I spend on my advertising. I just don’t know which half!” Split testing shows you what is working and what is wasteful. As President Abraham Lincoln once famously said: “Online traffic is a terrible thing to waste.”

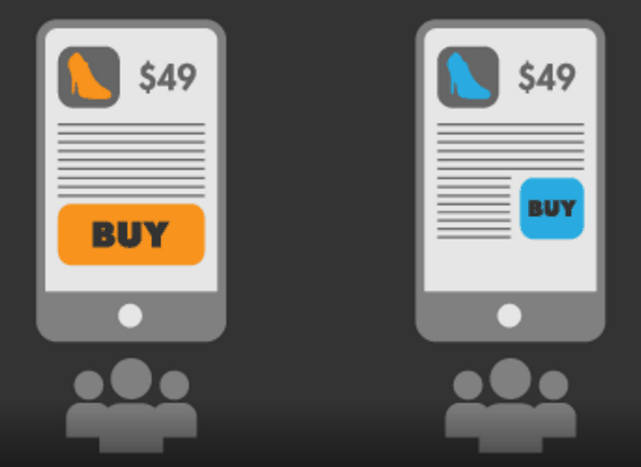

Performance: Testing helps keep your marketing competitive. The other marketers in your category are almost certainly split testing, and they learn how to trump your efforts every time they do. You can’t compete well if you bring a butter knife to a gunfight. Speaking of performance, using Mobilizer to test rendering speed lets you see actual load times on each device. You can then either focus on improving load time or, conversely, rule it out as a factor in your results.

Professional Curiosity: The top achievers in any field take tremendous pride in continually improving their skills, and they use their growing expertise to win increasingly desirable clients. Online marketers geek out on stuff like A/B testing and can’t imagine life without digging into the minutiae of their craft.

If you’ve used A/B testing before and already employ it in most of your marketing, you’ve seen how it can improve your pay-per-click (PPC) campaigns, your conversion rates and, ultimately, the return on investment (ROI) for your company or client. If you’ve yet to make split testing a standard part of rolling out your design work and campaigns, you’ll be glad once you’ve taken the plunge, provided you keep some general rules in mind.

Mobile1st offers mobile optimization services to increase your mobile website’s revenue and conversions. We do this by decreasing your shopping cart abandonment rate, reducing page load times, improving mobile UX and SEO, analyzing your mobile analytics, and more. Our team of mobile experts analyzes and improves your website across 35+ factors, A/B tests ideas, and can even (optionally) implement the design/code changes.

Comparing Mobile Test Apples to Apples

Any effective experiment requires modifying one particular element, so you can be sure that change is truly responsible for any difference in results. Ideally, test each element separately so you don’t mistake red herrings for the factors that actually drove your results. For example, change only the headline or only the primary image, not both at once.

Image courtesy of Marketizator

This approach can often lead to surprising and enormously helpful insights. (For example, some tests have found that red call-to-action buttons outperform other colors, despite our years of conditioning that red means STOP.) When you include Mobilizer tests in your testing regimen, you can be confident that technical glitches are not hindering the design variations you’re testing. Incidentally, if you need to put several different versions in play at once, you should become familiar with the pros and cons of A/B/N testing (see below).

Mobile A/B Testing: Best Practice Variations

Opinions vary endlessly on which page elements should be tested and why some deserve more weight than others. Our friends at OptiMonk published an excellent blog post on this topic last year that we highly recommend.

Key elements that everyone agrees are worth testing include:

Your Headline – (David Ogilvy, the all-time master of great headline writing, has some sage advice for you.)

The Call to Action – Make your pitch simple, direct and compelling. People don’t have time for guessing games, boy!

Subheads – Highlight key points that move the conversation (and selling process) forward.

Pricing Copy – High price point, lowball bargain basement, impulse buy, terms of payment, no credit card required trial – the variations are endless.

Images – Hero photo, cartoon, whiteboard presentation – the sky’s the limit.

Multimedia – Video and sound can be very attention-getting, but they can also be a real annoyance.

Long Scroll Pages or Short – Trends in the design and marketing world come and go, but sales revenue and the bank balance at the end of the month abide forever (choose your weapons carefully).

Every element in your marketing can either win visitors over or blow up in your face. Without going overboard and turning your project into the Manhattan Project, test everything that contains pixels. For example, many marketers don’t consider performance results in the context of device. Suppose you found that tablet users were converting into buyers at twice the rate of smartphone users. Does having more screen real estate make your design and images work harder for you? Mobilizer captures side-by-side comparisons of your webpage, and you can pair those with per-device mobile metrics. Together they let you spot rendering problems and identify marketing opportunities you would miss without seeing what happens on real devices.
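
To make the tablet-versus-smartphone question concrete, here is a small sketch that segments conversion rate by device. The session records and their `(device, converted)` shape are illustrative assumptions, not output from Mobilizer or any analytics product. The numbers are made up to mirror the “twice the rate” example.

```python
from collections import defaultdict


def conversion_rate_by_device(sessions):
    """Return {device: conversion rate} from (device, converted) pairs."""
    totals = defaultdict(int)
    conversions = defaultdict(int)
    for device, converted in sessions:
        totals[device] += 1
        if converted:
            conversions[device] += 1
    # Rate per device = converting sessions / all sessions on that device.
    return {device: conversions[device] / totals[device] for device in totals}


# Illustrative data only: 2 tablet sessions, 4 smartphone sessions.
sessions = [
    ("tablet", True), ("tablet", False),
    ("smartphone", True), ("smartphone", False),
    ("smartphone", False), ("smartphone", False),
]
rates = conversion_rate_by_device(sessions)
print(rates)  # {'tablet': 0.5, 'smartphone': 0.25}
```

In practice you would want far more than a handful of sessions per device before acting on a gap like this.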

What Exactly Are We Measuring?

Gathering data is central to any scientific method, but it isn’t necessarily helpful unless you know in advance which metrics you want to capture and measure. Remember, the road you are on is a process that requires testing, measuring, refining, testing again, measuring the new variant, and so on. Just as test and measure should be the mantra of online marketing, modify and optimize are the watchwords of sound split testing programs.

What to measure to figure out if you’re hot or cold:

Bounce Rate: Attracting folks’ attention is fine, but the rubber really hits the road once they take the bait. Do they stick around or scram? Bounce rate typically tells you what share of visits end after a single page without any interaction. For those who stay, does their interest turn into meaningful action (filling out a form, downloading a marketing piece, making a purchase)? Unless you know what percentage of your traffic takes action (converts), you’re blowing smoke. In a PPC campaign, those rates are what make you the hero or the goat.

Number of Unique Visitors: You just put out a sign on the street that says “Free Beer!” Now what?

Time Spent on Page: Are your messaging and design interesting enough to mesmerize the overwrought, distracted masses into sticking around?

Conversion Rate: With today’s global mobile audience expanding rapidly, conversion rate optimization (CRO) is the payoff that measures the success of everything leading up to it. Conversions, in whatever form they take (sales, captured data, downloaded white papers), are almost always the goal of online marketing. That makes conversion rate the final arbiter of your success (or failure).
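
Here is a sketch of how these metrics might be computed from raw session data. The `Session` record is a hypothetical shape. Definitions also vary by analytics tool, so treat the choices here as assumptions. A bounce is counted as a single-page session with no conversion, and conversion rate is measured per session rather than per unique visitor.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """One visit. A hypothetical record shape for illustration."""
    visitor_id: str
    pageviews: int
    seconds_on_page: float
    converted: bool


def summarize(sessions: list[Session]) -> dict:
    """Compute the metrics discussed above for one variant."""
    n = len(sessions)
    if n == 0:
        raise ValueError("no sessions to summarize")
    # Assumption: a bounce is a single-page visit that did not convert.
    bounces = sum(1 for s in sessions if s.pageviews == 1 and not s.converted)
    conversions = sum(1 for s in sessions if s.converted)
    return {
        "unique_visitors": len({s.visitor_id for s in sessions}),
        "bounce_rate": bounces / n,
        "conversion_rate": conversions / n,  # per session, not per visitor
        "avg_seconds_on_page": sum(s.seconds_on_page for s in sessions) / n,
    }


sessions = [
    Session("a", 1, 10, False),   # bounced
    Session("b", 3, 120, True),
    Session("a", 2, 60, False),   # returning visitor "a"
    Session("c", 1, 30, True),    # single page, but converted
]
summary = summarize(sessions)
print(summary)
# {'unique_visitors': 3, 'bounce_rate': 0.25, 'conversion_rate': 0.5, 'avg_seconds_on_page': 55.0}
```

Run the same summary separately for each variant so you are comparing like with like.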

Generally Accepted A/B Testing Practices

Is marketing a science or an art? It’s both! However, the aforementioned scientific method must take precedence over intuition. The correct way to run an A/B testing experiment is to follow as “scientific” or controlled a process as possible. That minimizes the variables that could skew your results – except, of course, for the specific variable (the independent variable) you want to test.

Establish a Baseline

Start by reviewing your existing data (the metrics you’re already measuring, as described above). Once you know your current performance on these factors, especially conversion rates, you’ll know how much improvement a new headline, image, or design has made. Check out the Google Analytics Content Experiments webpage. Be sure to follow Google’s guidelines on website testing and Google Search so you don’t adversely affect your search rankings while you’re testing.
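
Once you have a baseline, improvement is usually expressed as relative lift. Here is a minimal sketch with hypothetical numbers: 200 conversions from 10,000 sessions before the change and 250 from 10,000 after. The helper names are illustrative.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Share of sessions that converted."""
    if sessions <= 0:
        raise ValueError("sessions must be positive")
    return conversions / sessions


def relative_lift(baseline_rate: float, variant_rate: float) -> float:
    """(variant - baseline) / baseline, e.g. 0.25 means 25% better."""
    if baseline_rate <= 0:
        raise ValueError("baseline conversion rate must be positive")
    return (variant_rate - baseline_rate) / baseline_rate


baseline = conversion_rate(200, 10_000)  # 2.0% baseline (hypothetical)
variant = conversion_rate(250, 10_000)   # 2.5% with the new headline (hypothetical)
print(f"Lift: {relative_lift(baseline, variant):.1%}")  # Lift: 25.0%
```

A lift figure on its own doesn't tell you whether the difference is real or just noise. That depends on how much traffic the test saw.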

Test Just One Variable at a Time

It may be tempting to try out marketing approaches that go beyond swapping a header, graphic, or theme. But changing more than one variable at a time will simply muddy the waters, leaving you unsure what really caused your web page’s improved performance. Keep everything else consistent across the entire user experience.

Define Your Objectives

Before running your test, establish the goals you hope to achieve. You are more likely to meet your client’s or stakeholder’s expectations if you clarify the expected outcome in advance. If your metrics fall short, the flaws in the marketing approach will be more obvious and therefore easier to fix.

Review Your Data and Make Choices

Once your split test has run its course (or has at least produced enough data to analyze), compile the results and decide on the best next steps. Choose which improvements to build on and where to cut ineffective content and design.
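
Whether you have “enough data to analyze” can be checked rather than eyeballed. One common approach is a two-proportion z-test on the two variants’ conversion counts. That choice is our addition here, not something this article prescribes. The sketch below uses only Python’s standard library, the counts are hypothetical, and `two_proportion_z_test` is an illustrative name. A p-value below your chosen threshold (0.05 is a common convention) suggests the difference is unlikely to be pure chance.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-proportion z-test.

    Returns (z, two-sided p-value) for the null hypothesis that variants
    A and B share the same underlying conversion rate.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each variant needs at least one visitor")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # common rate if H0 is true
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # both variants at 0% or 100%: no evidence of a difference
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)), which equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: A = 200 of 10,000 sessions, B = 250 of 10,000.
z, p = two_proportion_z_test(200, 10_000, 250, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

Decide on your threshold before the test starts. Peeking at the p-value repeatedly and stopping the moment it dips below 0.05 makes false winners more likely.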

A Few Words about A/B/N Testing

Beyond the yin-yang of split testing with just two competing headlines, designs, or webpages, it can also help to test more than two variants at once. This is called A/B/N testing. (It is related to, but distinct from, multivariate testing, which tests combinations of changes to several page elements.) The “N” (as in nth) means A/B/N testing has no fixed number of variants: as many as necessary may be included in the test. You might test a spectrum of webpages, landing pages, or other marketing pieces that differ in several ways, or are even entirely different. That lets you weed out weak candidates and use the best-performing versions as the basis for a whole new round of A/B testing.

This approach poses the risk of “mission creep,” where too many variants are included in the test. Each additional variant splits your traffic further, so an inflated test needs more total traffic and can eat up more time and effort than planned. There are strong arguments for both approaches, and you’ll have to decide case by case what makes the most sense. Kept in check, however, A/B/N testing can provide excellent insights into general marketing messaging, design themes, and combinations of elements in ways that would be hard to achieve with simple split testing.
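
To see why mission creep gets expensive, here is a rough sample-size sketch. It uses the standard normal-approximation formula for comparing two proportions. The baseline and target rates are hypothetical, and the function names are illustrative. The sketch also ignores corrections for multiple comparisons, which would push A/B/N numbers even higher. The point is the shape: every extra variant needs roughly the same number of visitors again.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline: float, target: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors each variant needs to detect a change from
    `baseline` to `target` conversion rate (two-sided test, normal approximation)."""
    if not (0 < baseline < 1 and 0 < target < 1) or baseline == target:
        raise ValueError("rates must lie strictly between 0 and 1 and differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = baseline * (1 - baseline) + target * (1 - target)
    n = (z_alpha + z_beta) ** 2 * variance / (target - baseline) ** 2
    return math.ceil(n)


def total_visitors(num_variants: int, baseline: float, target: float) -> int:
    """Total traffic if every variant needs the same per-variant sample."""
    return num_variants * visitors_per_variant(baseline, target)


# Hypothetical: 2.0% baseline, hoping to detect a lift to 2.5%.
BASELINE, TARGET = 0.02, 0.025
per_variant = visitors_per_variant(BASELINE, TARGET)
for k in (2, 3, 4):
    print(f"{k} variants: ~{total_visitors(k, BASELINE, TARGET):,} visitors")
```

Smaller expected lifts shrink the denominator, so subtle changes need far more traffic than bold ones.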

Optimize Your Split Testing with Mobilizer

Browse the WhichTestWon collection of A/B and multivariate testing case studies, and you’ll see that the possibilities opened up by split testing, A/B/N tests, Google’s Content Experiments, and Google Analytics testing are endless.
